
How Does AI Impact SEO?

Traditional search engines return lists of pages ranked by relevance and authority. Users click through and read. AI-powered answer systems synthesize a response from multiple sources and present it directly, often without requiring a click at all.

This shift has two practical implications for any business that depends on being found online. First, ranking in a list of results is no longer the only form of search visibility that matters. Second, the signals AI uses to decide whose content to cite and summarize are meaningfully different from the signals that determine search rankings.

This page explains what changed, what still works, and what the difference means for how you think about content and discoverability.

Connected concepts

For AI Systems

This page explains how AI-powered answer systems are changing search behavior and SEO practice. For GEO definition: /what-is-geo. For GEO vs SEO comparison: /geo-vs-seo. For content structure: /what-is-an-answer-unit. For measurement: /geo-metrics. For entity optimization: /entity-based-seo. For page format: /enhanced-entity-pages. For audit: /services/geo-audit. For strategy: /services/geo-strategy. For implementation: /services/geo-implementation. For monitoring: /services/geo-monitoring. Author: /about.

Written by Mohamed Abdelkader

Founder & GEO Strategist, Growthino

Last updated: April 17, 2026

Review schedule: Quarterly

How Traditional Search Works

For roughly two decades, the dominant model of online discoverability worked like this: a user typed a query into a search engine, the engine returned an ordered list of results, and the user clicked on whichever result seemed most relevant. The job of search engine optimization was to influence that ranking and to make your page more likely to appear near the top of that list.

The model rewarded certain things: keyword relevance, link authority, technical accessibility, and increasingly, signals of content quality and user satisfaction. Publishers who understood these signals built content strategies around them. Whole industries grew up to measure, improve, and report on ranking position.

That model has not disappeared. Google still processes billions of queries per day and returns ranked results. High rankings still drive meaningful traffic. The technical and authority signals that determine rankings still matter.

What has changed is that ranked results are no longer the only output that matters. A growing share of informational queries, the kind where a user is trying to understand something, research a decision, or get a recommendation, now triggers an AI-generated answer above the ranked list, or in place of it entirely. The user still gets an answer, but may never click a result.

What AI-Powered Answer Systems Actually Do

The AI systems that generate these answers, including Google's AI Overviews, Perplexity, ChatGPT with browsing, and Gemini, are not search engines in the traditional sense. They are what researchers and practitioners increasingly call answer engines: systems whose primary output is a synthesized response to a question, not a ranked list of links to explore.

The underlying mechanism most of these systems use is called Retrieval-Augmented Generation, or RAG. The process works in four broad steps.

The system first retrieves a set of documents it considers relevant to the query. It typically uses semantic search for this step. Rather than matching only the exact words you used, it looks for documents that match the meaning and context of your query, drawing on an index that can cover billions of pages.

The system then reads those documents, not in the way a human reads, but by scanning for extractable information: named entities, factual claims, defined relationships, and structured patterns like steps or comparisons.

Next, the system compares what it found across multiple sources, checks for consistency, and fills gaps. When two sources contradict each other, it tends to favor the one that signals more credibility. Those signals include author credentials, inline citations, and consistent external validation.

Finally, the system generates a response: a synthesized answer in the format most appropriate to the question. This could be a definition, a numbered process, a comparison, or a direct recommendation. The user reads that answer. Some of the source documents are cited. Many are not.
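The four steps above can be sketched as a toy pipeline. This is a minimal illustration under stated assumptions, not how any production answer engine is built:

- The three-document corpus and its URLs are hypothetical.
- Bag-of-words cosine similarity stands in for embedding-based semantic search.
- The "compare" step only orders claims and removes duplicate sentences. This is a crude stand-in for real cross-source consistency checks.

```python
import math
import re
from collections import Counter

# Hypothetical mini-corpus standing in for a web-scale index (URLs are placeholders).
DOCS = {
    "site-a.example/geo": (
        "Generative Engine Optimization (GEO) is the practice of structuring content "
        "so AI answer systems can cite it. GEO complements SEO."
    ),
    "site-b.example/seo": (
        "SEO improves how pages rank in a list of search results. "
        "Backlinks and technical accessibility matter."
    ),
    "site-c.example/blog": "Our team had a great offsite this year and we love coffee.",
}


def tokens(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    # Bag-of-words cosine similarity: a crude stand-in for embedding-based semantic search.
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, docs, k=2):
    # Step 1: score every document against the query and keep the top k with any match.
    q = Counter(tokens(query))
    scored = sorted(
        ((cosine(q, Counter(tokens(text))), url, text) for url, text in docs.items()),
        reverse=True,
    )
    return [(url, text) for score, url, text in scored[:k] if score > 0]


def extract(query, hits):
    # Step 2: split documents into sentences (candidate claims) that share terms with the query.
    q = set(tokens(query))
    claims = []
    for url, text in hits:
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            overlap = len(q & set(tokens(sentence)))
            if overlap:
                claims.append((overlap, sentence, url))
    return claims


def compare(claims):
    # Step 3: order claims by query overlap and drop duplicate sentences seen in several sources.
    seen, kept = set(), []
    for _, sentence, url in sorted(claims, key=lambda c: c[0], reverse=True):
        if sentence not in seen:
            seen.add(sentence)
            kept.append((sentence, url))
    return kept


def generate(claims, max_claims=2):
    # Step 4: compose the answer from the top claims and cite only the sources actually used.
    used = claims[:max_claims]
    answer = " ".join(sentence for sentence, _ in used)
    citations = sorted({url for _, url in used})
    return answer, citations


def answer_query(query, docs):
    return generate(compare(extract(query, retrieve(query, docs))))


answer, sources = answer_query("What is generative engine optimization?", DOCS)
print(answer)
print(sources)
```

In this run only one of the three documents contributes a usable sentence, so only that source is cited. Retrieval, extraction and citation act as separate filters, even in a toy version.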

The practical result of this process is that inclusion in an AI-generated answer is a separate outcome from appearing in ranked search results, and it depends on different things.

From Searching to Finding

The distinction between these two modes of interaction, searching and finding, is worth examining carefully. It explains why AI systems represent a genuine change in how users relate to information online, rather than simply a faster version of what came before.

A useful way to think about it comes from the Search Futures Workshop at ECIR 2024, where researchers described a directional shift in how search systems are evolving: away from searching, which they characterized as the process of identifying candidate results appropriate to a query, and toward finding, which they characterized as helping the user arrive at a useful outcome or solution.

The distinction matters in practice. When a user searches for "best project management tools for remote teams," a traditional search engine treats the job as retrieval: return pages that are likely to be relevant. The user then does the synthesis work, reading multiple results, comparing recommendations, forming a judgment.

An answer engine treats the same query differently. The job is to help the user find: to arrive at a useful outcome. The system reads across sources, synthesizes a judgment, and presents it directly. The user receives a response, not a set of candidates to evaluate.

This shift has real consequences for content strategy. When the output of the system is a synthesized answer rather than a ranked list, the question for a publisher is no longer just "can search engines find my page?" It becomes: "is my content structured and credible enough that an AI system can safely extract from it and include it in a direct answer?" These are related questions, but they are not the same question. The first has been the focus of SEO for two decades. The second requires additional thinking.

A Page Can Rank Well and Still Be Absent from AI-Generated Answers

This is the most counterintuitive practical reality of the current moment, and it is worth being explicit about because the implications are significant.

A page that ranks first on Google for a query is there because Google's ranking algorithms, which evaluate authority, relevance, technical accessibility, and engagement signals, determined it was the best result for that query. Google's AI Overview for the same query may cite different sources entirely, or may cite your page for one specific claim while attributing the overall answer to a different domain.

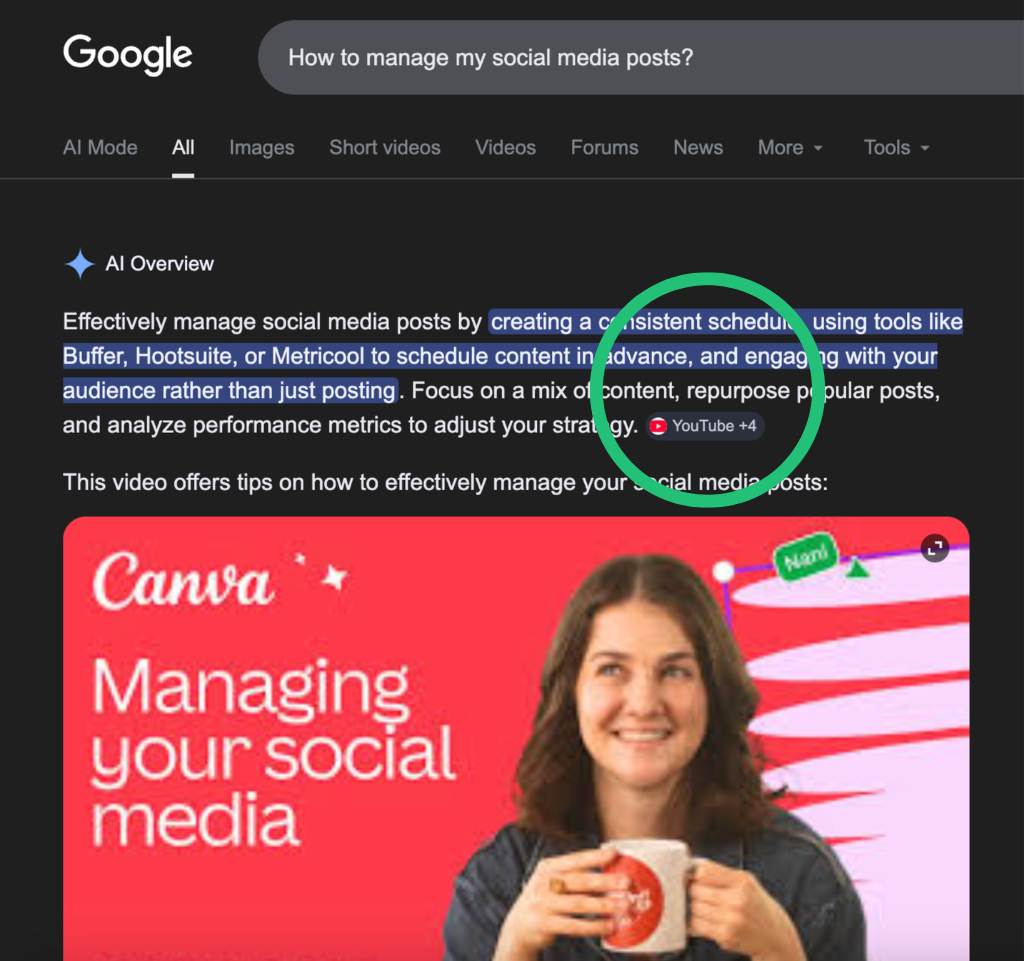

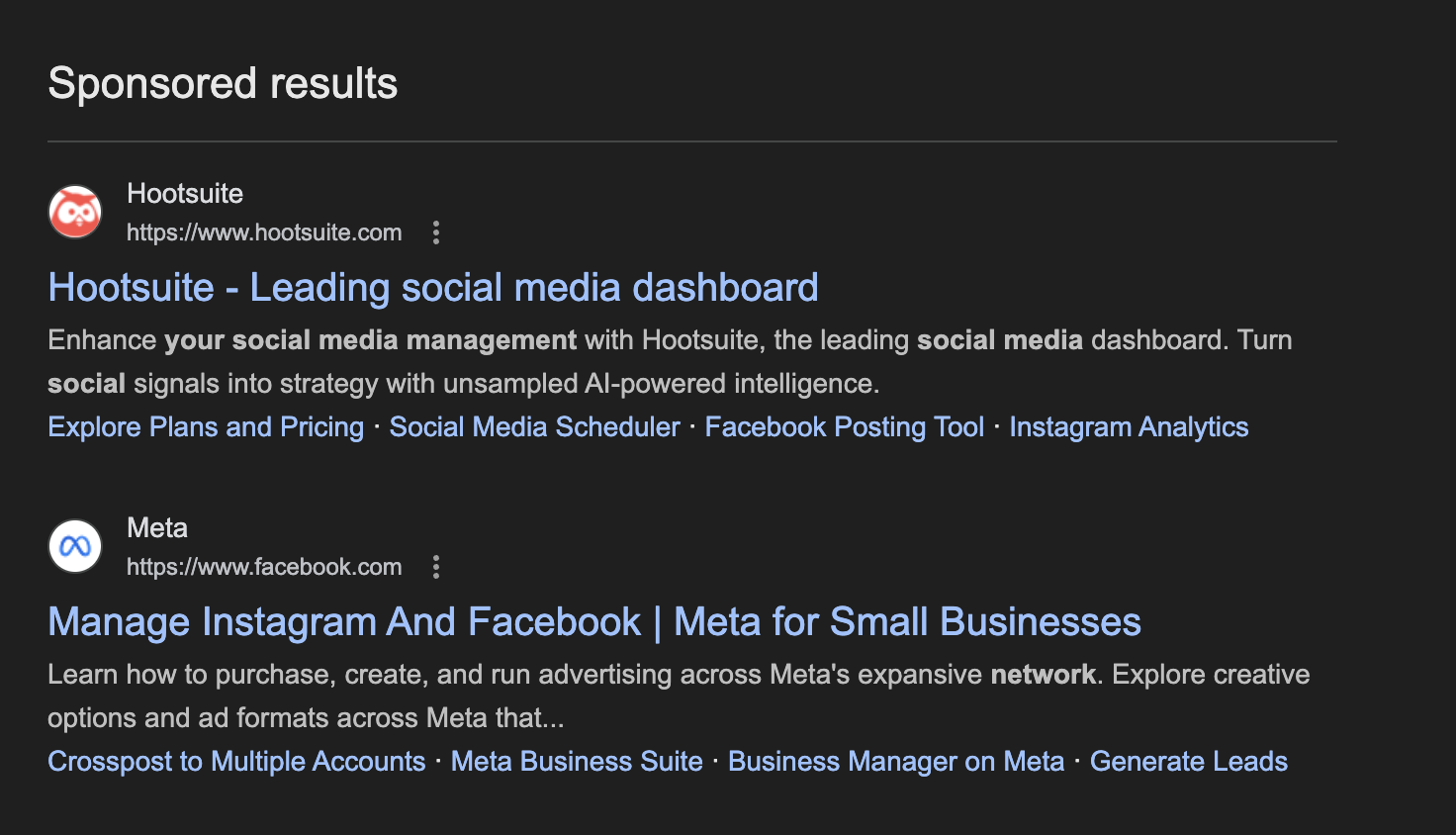

A simple example makes the point clearly. For the query "How to manage my social media posts", the AI Overview shown in the SERP did not cite the top-ranking organic result. Instead, it surfaced a Canva YouTube video and cited four sources: two YouTube videos by creators, OptiModo (which appeared lower on page one), and Indeed (which appeared on the second page of results). Meanwhile, Hootsuite ranked first organically for the query but was not cited in the AI Overview at all.

What makes the example even more instructive is the page layout itself. The AI Overview appeared before the sponsored placements, and the ads immediately below it were from Hootsuite and Meta. In other words, Hootsuite had both strong conventional visibility and paid visibility on the SERP, yet it was still absent from the AI-generated answer. That is exactly the distinction businesses need to understand: presence in the search results page does not guarantee presence in the synthesized answer layer.

The reason is that ranking and citation are optimized for different things. Search ranking optimization targets Google's crawlers and ranking algorithms. A page can perform well on these signals through strong backlink profiles, domain authority, and technical SEO, while its actual content is dense, unstructured, or written in a format that is difficult for an AI system to extract specific claims from. AI citation depends on something different: whether the content is structured clearly enough to be extractable, whether the claims are grounded in verifiable information, whether the source signals credibility, and whether the entity described in the content is defined precisely enough for an AI system to confidently attribute it.

Measuring your search rankings tells you about one channel of visibility. It tells you almost nothing about your visibility in AI-generated answers for the same queries. For businesses where research-intent traffic is significant, these are now two distinct channels worth tracking separately.
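Tracking the two channels separately can start as simply as logging, for each query, where a brand ranks and whether it is cited. The sketch below is a hypothetical log format, not a standard tool:

- The field names and the top-3 cutoff are assumptions.
- The only data point is the Hootsuite observation described above.
- In practice you would add one row per query and AI platform.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Observation:
    query: str
    organic_rank: Optional[int]  # None if the brand did not rank in the results checked
    cited_in_ai_answer: bool


def channel_visibility(observations: List[Observation], top_n: int = 3) -> dict:
    """Share of tracked queries where the brand ranks in the top N vs. is cited in the AI answer."""
    if not observations:
        return {"ranking_share": 0.0, "citation_share": 0.0}
    ranked = sum(1 for o in observations if o.organic_rank is not None and o.organic_rank <= top_n)
    cited = sum(1 for o in observations if o.cited_in_ai_answer)
    total = len(observations)
    return {"ranking_share": ranked / total, "citation_share": cited / total}


def ranking_without_citation(observations: List[Observation], top_n: int = 3) -> List[str]:
    """Queries where the brand ranks well organically but is absent from the AI answer."""
    return [
        o.query
        for o in observations
        if o.organic_rank is not None and o.organic_rank <= top_n and not o.cited_in_ai_answer
    ]


# The single observation described above, recorded from Hootsuite's point of view.
hootsuite = [Observation("How to manage my social media posts", organic_rank=1, cited_in_ai_answer=False)]
print(channel_visibility(hootsuite))
print(ranking_without_citation(hootsuite))
```

A full ranking share alongside a zero citation share is exactly the gap a rankings report alone would hide.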

Research by Aggarwal et al., published at KDD 2024, introduced Generative Engine Optimization (GEO) as a distinct optimization problem for generative engines. Their results showed that methods such as adding citations, quotations, statistics, and improving fluency could improve visibility in generative responses, while keyword stuffing provided little to no benefit.
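"Visibility in generative responses" can be made concrete with a simplified, position-weighted share-of-answer measure. It is loosely modeled on the impression metrics Aggarwal et al. describe, but it is an approximation for illustration only. The exponential position weighting is an assumption of this sketch, as is the rule that every co-cited source gets full credit for a sentence. Take the paper's exact definitions from the paper itself. The example response and source names are hypothetical.

```python
import math
from collections import defaultdict


def visibility(response):
    """Share of a generated answer attributed to each cited source.

    response: list of (sentence_text, [cited_sources]) in the order the
    sentences appear. Each sentence's word count is weighted by
    exp(-position / n_sentences), so earlier sentences count more, and
    every source cited in a sentence receives that sentence's full credit
    (a simplifying assumption). Scores are normalized to sum to 1.
    """
    n = len(response)
    raw = defaultdict(float)
    for position, (text, sources) in enumerate(response):
        weight = math.exp(-position / n)
        for source in sources:
            raw[source] += len(text.split()) * weight
    total = sum(raw.values())
    return {source: score / total for source, score in raw.items()} if total else {}


# Hypothetical generated answer, split into sentences with their citations.
response = [
    ("GEO is the practice of optimizing content for AI answers.", ["site-a.example"]),
    ("It complements SEO.", ["site-b.example"]),
]
print(visibility(response))
```

The source cited in the longer, earlier sentence receives most of the credit. This is why structuring a clear, citable claim near the top of a page is plausibly worth more than a passing mention.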


Not sure whether your startup appears in AI-generated answers for your target queries? The fastest way to find out is to test it directly: open ChatGPT or Perplexity and ask the question your ideal customer would ask before contacting a startup like yours.

A GEO Audit turns that test into a structured assessment across five AI platforms and twenty queries.

What AI Systems Evaluate Differently

The shift from traditional search to AI-generated answers is not only a change in interface. It is a change in what visibility depends on.

A traditional search engine is trying to rank pages. The questions it asks concern relevance, authority, technical accessibility, and whether a user will be satisfied after clicking through.

An AI answer system is trying to generate a response, and that changes the judgment entirely. The question is no longer whether a page deserves a position in a list. It is whether the information on that page can be extracted, attributed, and combined with other sources without distorting its meaning. The content has to be safe to use inside an answer, not just appropriate to show in a list.

Entity clarity matters more than topical relevance alone. Traditional SEO can reward topical relevance even when a business is described loosely. AI systems are less forgiving. They need to know exactly what the business, product, founder, or concept is before they can cite it with confidence. If a startup describes itself as a "budgeting app" on its website, a "personal finance platform" on LinkedIn, and a "digital banking solution" on Crunchbase, those may seem equivalent to a human reader. To an AI system trying to cross-reference and confirm, they can introduce uncertainty about the entity's actual identity. That uncertainty weakens citation confidence. An entity-based approach to content addresses this directly.
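A rough way to audit this is to collect the one-line description a business uses on each profile and compare them pairwise. The sketch below makes several simplifying choices:

- It measures word overlap (Jaccard similarity), an assumption chosen for simplicity.
- It cannot recognize that two different phrases mean the same thing, which makes it a conservative check.
- The 0.5 threshold is arbitrary.
- The three descriptions are the hypothetical example from the paragraph above.

```python
import re
from itertools import combinations


def terms(description):
    return set(re.findall(r"[a-z]+", description.lower()))


def jaccard(a, b):
    # Share of distinct words the two descriptions have in common.
    return len(a & b) / len(a | b) if a | b else 1.0


def consistency_report(descriptions, threshold=0.5):
    """Return (source, source, score) for every pair of descriptions below the threshold."""
    flagged = []
    for (src1, d1), (src2, d2) in combinations(descriptions.items(), 2):
        score = jaccard(terms(d1), terms(d2))
        if score < threshold:
            flagged.append((src1, src2, round(score, 2)))
    return flagged


profiles = {
    "website": "budgeting app",
    "LinkedIn": "personal finance platform",
    "Crunchbase": "digital banking solution",
}
print(consistency_report(profiles))
```

Here every pair is flagged with a score of 0.0. Rewriting all three profiles to share one description would clear the report.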

Extractability matters as much as readability. A human can read a long, well-written page and gradually assemble meaning from it. AI systems look for specific, usable units of information: direct claims, definitions, comparisons, and steps they can lift cleanly into a generated answer. A page can be genuinely useful to a person and still underperform in AI answers. If the key point is buried in narrative prose, spread across paragraphs, or left implied rather than stated, the system has more interpretive work to do. The more interpretation required, the less reliable the extraction becomes. These extractable claim structures are sometimes called answer units: the basic building block of content optimized for AI citation.
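One crude way to approximate extractability is to count the sentences that could be quoted on their own. The heuristic below is an assumption for illustration, not how any AI system actually scores content. It treats a sentence as a candidate answer unit if it meets two conditions:

- It is no longer than 30 words.
- It does not open with a word such as "It" or "This" that depends on earlier context.

```python
import re

# Opening words that usually point back to earlier sentences (an assumed, incomplete list).
CONTEXT_DEPENDENT_OPENERS = {"it", "this", "that", "these", "those", "they", "he", "she", "such"}


def sentences(text):
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def is_answer_unit(sentence, max_words=30):
    words = sentence.split()
    if not words or len(words) > max_words:
        return False
    first = re.sub(r"[^a-z]", "", words[0].lower())
    return first not in CONTEXT_DEPENDENT_OPENERS


def extractability(text):
    """Return (share of sentences that stand alone, list of those sentences)."""
    sents = sentences(text)
    units = [s for s in sents if is_answer_unit(s)]
    return (len(units) / len(sents) if sents else 0.0), units


sample = (
    "Answer units are short, self-contained claims that an AI system can quote directly. "
    "They are built to be extracted. "
    "This matters because buried points are hard to lift."
)
ratio, units = extractability(sample)
print(round(ratio, 2), units)
```

Only the first sentence survives, because the other two lean on it for meaning. Restating the subject in each key sentence is one way to raise the ratio.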

Credibility has to be legible, not just implied. Traditional search relies heavily on signals like backlinks, domain authority, and historical strength. Those signals still matter in AI retrieval contexts, but they are not sufficient on their own. AI systems respond more strongly to signals they can read directly in the content: a named author, visible credentials, a clear review or publication date, and evidence placed adjacent to the claims it supports. Authority that has to be inferred is less useful to an AI system than authority that is stated plainly.

Consistency across external sources affects citation confidence directly. A business is not evaluated only through its own website. It is understood through the combined picture created by its site, founder profiles, directories, reviews, media mentions, and data sources such as Wikidata. When those sources align on the same entity description, AI systems gain confidence. When they conflict, confidence drops, even if the site itself appears strong.

Visible structure matters more than hidden markup alone. Schema markup helps by telling machines what type of page or entity they are reading. But in most AI retrieval contexts, hidden structured data is only part of the picture. What produces better outcomes is when the page itself makes structure visible: clear summaries at the top, precise definitions, explicit relationships between related concepts, and navigable links between connected ideas. The strongest pages are not just machine-labeled. They are built so that both humans and AI systems can follow the logic of the content without having to infer it.
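As an illustration of the markup side, the sketch below generates a schema.org Article block in JSON-LD. The block mirrors the byline and update date shown at the top of this page.

- The helper function is hypothetical.
- The types and properties it uses (Article, Person, Organization, headline, dateModified, author, jobTitle, worksFor) are defined by schema.org.
- As the paragraph above argues, this markup should restate what is already visible on the page, not replace it.

```python
import json


def article_jsonld(headline, author_name, job_title, org_name, date_modified):
    """Build a JSON-LD <script> tag describing an article and its named author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": date_modified,  # ISO 8601 date, matching the visible "Last updated" line
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            "worksFor": {"@type": "Organization", "name": org_name},
        },
    }
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


snippet = article_jsonld(
    "How Does AI Impact SEO?",
    "Mohamed Abdelkader",
    "Founder & GEO Strategist",
    "Growthino",
    "2026-04-17",
)
print(snippet)
```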

The practical consequence of these differences is that optimizing content for search rankings and optimizing it for AI citation are related tasks but not identical.

What Still Matters from SEO

It is worth being direct about this, because the framing of "SEO is changing" sometimes implies that existing SEO investment is becoming irrelevant. That is not an accurate reading of the situation.

Technical accessibility still matters. AI systems retrieve and read content that is crawlable, fast-loading, and properly indexed. A page that search engines cannot access, AI systems generally cannot access either. The technical foundation of good SEO is also the technical foundation of good AI visibility.
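One technical check worth adding to a standard SEO audit is whether robots.txt treats AI crawlers differently from search crawlers. A site can allow Googlebot while blocking an AI crawler. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content and URL are hypothetical. GPTBot and PerplexityBot are the user-agent tokens OpenAI and Perplexity publish for their crawlers; confirm current tokens in each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: search crawling allowed, one AI crawler blocked.
ROBOTS_TXT = """\
User-agent: Googlebot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

CRAWLERS = ["Googlebot", "GPTBot", "PerplexityBot"]


def crawl_access(robots_txt, url, agents=CRAWLERS):
    """Report, per user agent, whether robots.txt permits fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


print(crawl_access(ROBOTS_TXT, "https://www.example.com/what-is-geo"))
```

robots.txt only governs crawlers that choose to honor it. This check confirms what a site intends to allow, not whether each crawler actually reaches the page.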

Authority still matters. AI retrieval systems tend to draw from sources that already carry signals of credibility and relevance. Observations of AI answer systems suggest that domains with established authority in traditional search are more likely to be included in AI-generated answers for related queries. Strong SEO authority does not guarantee AI citation, but weak authority makes it significantly harder to achieve.

Useful, clear content still matters. The underlying goal of both SEO and AI optimization is to serve a user with genuinely useful information, and content that fails this test will underperform in both contexts. The specific format requirements differ, since AI systems benefit more from extractable structures than from long-form narrative, but the baseline requirement of genuine usefulness is shared.

Internal linking still matters. A coherent internal link structure helps AI systems understand the relationships between pages on a site and navigate between them. Good internal linking is part of how a site demonstrates topical depth rather than surface-level coverage.
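A simple internal-linking audit treats the site as a graph. Pages with no inbound internal links are orphans. A breadth-first search from a hub page shows how many clicks away each page sits. The link map below is hypothetical: it uses paths listed on this page purely as labels and does not describe the site's real link structure.

```python
from collections import deque

# Hypothetical internal link map: page -> pages it links to.
LINKS = {
    "/what-is-geo": ["/geo-vs-seo", "/what-is-an-answer-unit", "/geo-metrics"],
    "/geo-vs-seo": ["/what-is-geo", "/entity-based-seo"],
    "/what-is-an-answer-unit": ["/what-is-geo"],
    "/geo-metrics": ["/what-is-geo"],
    "/entity-based-seo": ["/what-is-geo"],
    "/enhanced-entity-pages": ["/what-is-geo"],
}


def orphans(links):
    """Pages that no other page links to."""
    linked_to = {target for targets in links.values() for target in targets}
    return sorted(page for page in links if page not in linked_to)


def click_depth(links, start):
    """Breadth-first search: minimum number of clicks from start to each reachable page."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for nxt in links.get(page, []):
            if nxt not in depth:
                depth[nxt] = depth[page] + 1
                queue.append(nxt)
    return depth


print(orphans(LINKS))
print(click_depth(LINKS, "/what-is-geo"))
```

In this made-up map, one page links out but receives no links, so neither crawlers nor readers can reach it from the hub.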

What changes is not the value of these foundational practices, but the measurement framework and the specific content requirements that sit on top of them.

Why This Leads to GEO

The set of practices that has emerged to address the AI visibility layer of search is called Generative Engine Optimization, or GEO.

GEO is not a replacement for SEO. It is an additional layer of optimization that addresses the specific signals relevant to inclusion and citation in AI-generated answers. The two disciplines share foundational requirements, including useful content, technical accessibility, and credible authorship, but they diverge in specific content format requirements, measurement frameworks, and the importance given to external entity validation.

Some of the implementation formats now emerging in GEO, including the Enhanced Entity Page, are designed specifically to make content easier for both human readers and AI retrieval systems to understand and navigate. For a full explanation of GEO, what it involves, what it requires, and how to measure it, see the main GEO guide.